It took longer than I expected, but I now have a self-hosted installation of FreshRSS that I can access via Reeder on macOS, iPadOS, and iOS, as well as on the web. This install has been on my to-do list for a while. If FreshRSS performs well on my server, I may cancel my Feedbin subscription.

Tag: Open Source

Large companies aren’t good homes for beloved services

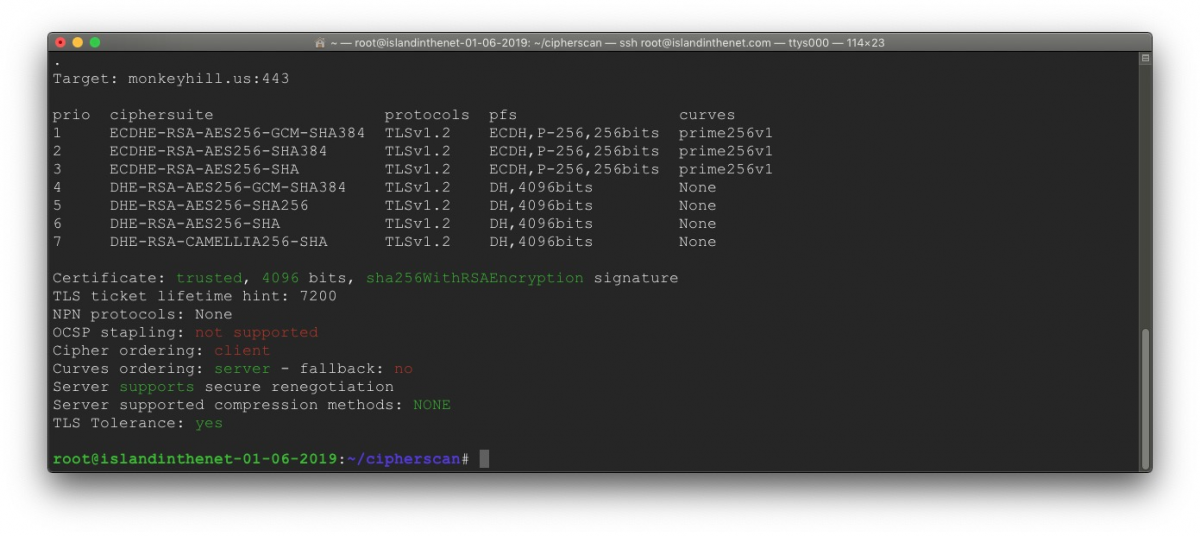

CipherScan

Using cipherscan to test the TLS certificate configuration of my web server.

Cipherscan tests the ordering of the SSL/TLS ciphers on a given target, for all major versions of SSL and TLS. It also extracts some certificate information, TLS options, OCSP stapling and more. Cipherscan is a wrapper around the OpenSSL s_client command line.

Cipherscan is meant to run on all flavors of UNIX. It ships with its own build of OpenSSL for Linux/64 and Darwin/64. On other platforms, it will use the OpenSSL version provided by the operating system (which may have limited cipher support), or your own version provided via the -o command line flag.
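
To get a feel for what "a wrapper around s_client" can look like, here is a rough Python sketch of one way to discover a server's cipher preference order: offer a list of ciphers, note which one the server picks, drop it from the list, and repeat until the handshake fails. This is my own illustration, not cipherscan's actual code. The function names (`s_client_command`, `negotiate`, `server_preference_order`) are made up, the `openssl` parameter only loosely mirrors the idea behind the -o flag, and I pin the connection to TLS 1.2 because `-cipher` does not control TLS 1.3 suites.

```python
import subprocess
from typing import Callable, List, Optional


def s_client_command(target: str, ciphers: List[str], openssl: str = "openssl") -> List[str]:
    """Build an `openssl s_client` argv offering only the given ciphers.

    `target` is "host:port". `openssl` is the path to the binary to use
    (hypothetical parameter, similar in spirit to cipherscan's -o flag).
    """
    host = target.rsplit(":", 1)[0]
    return [
        openssl, "s_client",
        "-connect", target,
        "-servername", host,           # send SNI so virtual hosts answer correctly
        "-tls1_2",                     # assumption: -cipher only governs TLS <= 1.2
        "-cipher", ":".join(ciphers),
    ]


def negotiate(target: str, ciphers: List[str], openssl: str = "openssl") -> Optional[str]:
    """Run one handshake and return the negotiated cipher, or None on failure."""
    try:
        result = subprocess.run(
            s_client_command(target, ciphers, openssl),
            input="",                  # empty stdin so s_client exits after the handshake
            capture_output=True,
            text=True,
            timeout=10,
        )
    except (OSError, subprocess.TimeoutExpired):
        return None
    # Look for the "Cipher    : NAME" line in the session summary.
    for line in result.stdout.splitlines():
        line = line.strip()
        if line.startswith("Cipher") and ":" in line:
            name = line.split(":", 1)[1].strip()
            if name in ciphers:        # ignores "0000" / "(NONE)" on failed handshakes
                return name
    return None


def server_preference_order(
    ciphers: List[str],
    handshake: Callable[[List[str]], Optional[str]],
) -> List[str]:
    """Repeatedly handshake, removing the chosen cipher each round."""
    remaining = list(ciphers)
    ordered: List[str] = []
    while remaining:
        chosen = handshake(remaining)
        if chosen is None or chosen not in remaining:
            break                      # server accepts none of what is left
        ordered.append(chosen)
        remaining.remove(chosen)
    return ordered
```

Against a real server this would be called as `server_preference_order(my_ciphers, lambda cs: negotiate("example.com:443", cs))`, where `my_ciphers` is whatever list of OpenSSL cipher names you want to probe. Passing the handshake in as a function keeps the ordering loop easy to try out without touching the network.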